Designed an AR experience aimed at understanding how human gestures have evolved as a result of interacting with technology.

My Role:

• UX Researcher and set designer

• Conducted secondary research, finding insights from publications

• Constructed the layout and organization of the green room set

• Coded the eraser tool for the experience's prototype

• Assisted in creating the research poster that was exhibited at India HCI, Hyderabad

The Team:

1 UX Researcher

2 UX Designers

4 Developers

Duration:

10 days

Product type:

Experience room

Secondary Research

Concept ideation

Set Prototyping

User Research

Interaction Design

OpenCV (Python library)

ArUco Markers

Google Jamboard

The Challenge

Technology has such a strong influence on people that many of our interactions now stem from the technology itself rather than from the human.

We wanted to understand the concepts of embodied interaction, the overlap of physical and digital spaces, and how future technologies may influence human behavior and decision-making in a digital world.

What are embodied interactions?

Interactions we have with tangible objects, aided by digital technology. Embodied interaction sits at the intersection of social computing and tangible computing.

Context

Have you ever?

Pinched at a page in a book in order to zoom in on the text?

Tapped buttons on a cardboard sign thinking it’s a smart screen?

Felt old on realizing that the hand gesture for “call me” has changed from 🤙 to ✋?

The technology we use daily has directly shaped our behavior. This rapid evolution has caused us to lose traditional bodily practices, and digital interfaces now guide our gestures and interactions so deeply that it has become unclear what drives our intuition.

We set out to find out why.

• Why are we losing our natural bodily practices while adapting to screen-based technologies?

• How has technology changed these interactions, and will our natural intuition morph along with it?

How might we use embodied interactions to preserve our natural gestures?

Research question

We explored many ideas for how we could create an experience where people use their natural gestures in a digital space.

We decided to recreate MS Paint!

A familiar space for many of us. The exploration took the form of an AR experience room where users could paint in virtual space. Users would have full spatial and bodily freedom to complete tasks familiar from MS Paint, which would help them emulate natural and intuitive gestures.

The experience room would let us observe users' behavior and gather their feedback when both the digital interface of Paint and the traditional gestures of painting were controlled through bodily gestures.

How are we building this?

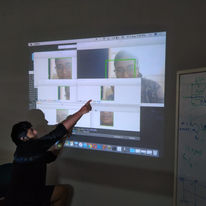

OpenCV is a library of functions for real-time computer vision. ArUco is a library of binary square fiducial markers that can be used for camera pose estimation. By employing these markers in our project, we could use OpenCV to detect the presence of a marker and trigger the algorithm associated with it.

The prototype for the experience room was built with OpenCV in Python. Each tool required a specific algorithm, which was coded separately and then integrated. We used multiple ArUco markers and linked each marker ID to its corresponding tool.
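One way to code tools separately and then integrate them under marker IDs is a small registry, sketched below. This is an illustrative pattern, not the project's code: the decorator `tool`, the `TOOL_REGISTRY` table, `run_tool`, and the IDs 0 and 1 are all hypothetical, and the tool bodies just return a description instead of drawing.

```python
from typing import Callable, Dict, Tuple

Point = Tuple[float, float]
ToolFn = Callable[[Point], str]

# Hypothetical registry linking ArUco marker IDs to tool functions.
TOOL_REGISTRY: Dict[int, ToolFn] = {}


def tool(marker_id: int) -> Callable[[ToolFn], ToolFn]:
    """Decorator: register a separately written tool under a marker ID."""
    def register(fn: ToolFn) -> ToolFn:
        if marker_id in TOOL_REGISTRY:
            raise ValueError(f"marker {marker_id} is already linked to a tool")
        TOOL_REGISTRY[marker_id] = fn
        return fn
    return register


# Hypothetical IDs: the project's actual ID assignments are not documented.
@tool(0)
def paintbrush(pos: Point) -> str:
    return f"paint at {pos}"


@tool(1)
def eraser(pos: Point) -> str:
    return f"erase at {pos}"


def run_tool(marker_id: int, pos: Point) -> str:
    """Integration point: look up the detected ID and run its tool."""
    fn = TOOL_REGISTRY.get(marker_id)
    return fn(pos) if fn is not None else "no tool"
```

For example, `run_tool(0, (1, 2))` returns `"paint at (1, 2)"`, while an unregistered ID returns `"no tool"`. Registering at definition time keeps each tool in its own function while guaranteeing that no two tools claim the same marker.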

For each marker's function, we predicted the gesture users would make and how the interaction would take place.

1. The ArUco marker shown by the user is captured by the camera and read as an image using the OpenCV library.

2. Using the ArUco marker dictionary, the shown marker is detected.

3. The functionality associated with the detected marker is displayed on the screen.
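The three steps above can be sketched as a camera loop, assuming opencv-python 4.7 or later, where detection goes through `cv2.aruco.ArucoDetector` (older releases use `cv2.aruco.detectMarkers` instead). The dictionary `DICT_4X4_50`, the tool-handler signature, and the helper names `marker_centre`, `make_opencv_detector`, `process_frame`, and `run_camera` are assumptions for illustration; the project's actual dictionary and code are not documented. OpenCV is imported lazily so the detection-to-tool logic can be exercised without a camera.

```python
from typing import Callable, Dict, List, Sequence, Tuple

Point = Tuple[float, float]
Detection = Tuple[int, Point]
# Hypothetical handler signature: receives the camera frame and the marker centre.
Tool = Callable[[object, Point], None]


def marker_centre(corners: Sequence[Sequence[float]]) -> Point:
    """Centre of a marker, taken as the mean of its four corner points."""
    xs = [float(c[0]) for c in corners]
    ys = [float(c[1]) for c in corners]
    return (sum(xs) / len(xs), sum(ys) / len(ys))


def make_opencv_detector() -> Callable[[object], List[Detection]]:
    """Step 2: detect markers from a predefined ArUco dictionary.

    Assumes opencv-python >= 4.7; DICT_4X4_50 is an assumed dictionary.
    """
    import cv2  # lazy import: the pure-Python parts run without OpenCV

    dictionary = cv2.aruco.getPredefinedDictionary(cv2.aruco.DICT_4X4_50)
    detector = cv2.aruco.ArucoDetector(dictionary, cv2.aruco.DetectorParameters())

    def detect(frame) -> List[Detection]:
        corners, ids, _rejected = detector.detectMarkers(frame)
        if ids is None:  # no markers in this frame
            return []
        # Each corners[i] has shape (1, 4, 2); ids has shape (N, 1).
        return [(int(marker_id), marker_centre(c.reshape(4, 2).tolist()))
                for marker_id, c in zip(ids.flatten(), corners)]

    return detect


def process_frame(frame, detect: Callable[[object], List[Detection]],
                  tools: Dict[int, Tool]) -> List[int]:
    """Steps 2-3: detect markers, then run the tool linked to each known ID.

    Returns the IDs that triggered a tool; unknown IDs are ignored.
    """
    handled = []
    for marker_id, centre in detect(frame):
        tool = tools.get(marker_id)
        if tool is not None:
            tool(frame, centre)
            handled.append(marker_id)
    return handled


def run_camera(tools: Dict[int, Tool], camera_index: int = 0) -> None:
    """Step 1: read frames from the camera and show the result; press q to quit."""
    import cv2

    detect = make_opencv_detector()
    cap = cv2.VideoCapture(camera_index)
    try:
        while True:
            ok, frame = cap.read()
            if not ok:
                break
            process_frame(frame, detect, tools)
            cv2.imshow("canvas", frame)
            if cv2.waitKey(1) & 0xFF == ord("q"):
                break
    finally:
        cap.release()
        cv2.destroyAllWindows()
```

Passing the detector into `process_frame` as a plain function keeps the marker-to-tool logic testable with fake detections, separate from the camera and OpenCV setup.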

Prototyping the Experience room

We had some predictions about how the markers would be used:

The gesture for the paintbrush tool involves a full range of movement.
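How the paintbrush turned marker positions into strokes is not documented. One plausible approach, assumed here, is to treat the marker centre in each frame as a point on the stroke and fill the gap between consecutive frames with straight-line (DDA-style) interpolation, so a sweeping arm movement still leaves a continuous line. `stroke_points` and `paint_path` are hypothetical names, and the canvas is simplified to a set of painted pixel coordinates.

```python
from typing import List, Set, Tuple

Point = Tuple[int, int]


def stroke_points(a: Point, b: Point) -> List[Point]:
    """Integer points on the segment from a to b (inclusive), so a fast
    movement between two frames still leaves a gap-free stroke."""
    (x0, y0), (x1, y1) = a, b
    steps = max(abs(x1 - x0), abs(y1 - y0))
    if steps == 0:
        return [a]
    return [(round(x0 + (x1 - x0) * t / steps), round(y0 + (y1 - y0) * t / steps))
            for t in range(steps + 1)]


def paint_path(path: List[Point]) -> Set[Point]:
    """Paint the marker centres seen in successive frames as one stroke
    (hypothetical canvas: a set of painted pixel coordinates)."""
    painted: Set[Point] = set(path[:1])  # a single detection still leaves a dot
    for a, b in zip(path, path[1:]):
        painted.update(stroke_points(a, b))
    return painted
```

For example, `paint_path([(0, 0), (2, 0), (2, 2)])` paints the five pixels along the resulting L-shaped stroke.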

The gesture associated with erasing on paper was incorporated into the functionality of the eraser tool.
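A minimal sketch of how an eraser could respond to the back-and-forth rubbing motion of erasing on paper, assuming the marker's horizontal position is tracked across frames. The reversal count, the jitter threshold, the circular erase area, and the names `is_scrubbing` and `erase` are all illustrative assumptions, not the project's eraser code.

```python
from typing import List, Set, Tuple

Point = Tuple[int, int]


def is_scrubbing(xs: List[float], min_reversals: int = 2,
                 min_step: float = 5.0) -> bool:
    """True if the marker's x positions change direction at least
    `min_reversals` times (assumed thresholds). Frame-to-frame moves
    smaller than `min_step` pixels are treated as jitter and skipped."""
    reversals = 0
    last_dir = 0
    for prev, cur in zip(xs, xs[1:]):
        delta = cur - prev
        if abs(delta) < min_step:
            continue
        direction = 1 if delta > 0 else -1
        if last_dir and direction != last_dir:
            reversals += 1
        last_dir = direction
    return reversals >= min_reversals


def erase(painted: Set[Point], centre: Point, radius: int) -> Set[Point]:
    """Remove painted pixels within `radius` of the marker centre
    (hypothetical canvas: a set of painted pixel coordinates)."""
    cx, cy = centre
    return {(x, y) for (x, y) in painted
            if (x - cx) ** 2 + (y - cy) ** 2 > radius ** 2}
```

Requiring a scrubbing motion before erasing mirrors the paper gesture and avoids wiping the canvas whenever the eraser marker merely passes over it.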

A 3D model of the case study: the experience room

Open the gates!

User testing was enthusiastic and fun.

Key Takeaways

Users needed an explanation when switching from one tool to another

It was not as intuitive as we had hoped it would be

People were slow in their movements

Our code couldn't respond as fast as users moved, which constrained their movements.

Even with an abundance of space, users remained static

This may stem from their past interactions with 2D screens.

Future Steps

• Conduct further testing, along with feedback sessions, to better understand why users respond the way they do.

• Explore different use cases to gauge the reach of the project and see whether the context affects the results. Possible use cases include education, medicine, and sports.

• This case study could be expanded to a three-dimensional canvas, where users could make full use of the surrounding environment. Adding more tools would enrich the experience and might help users discover a wider range of gestures.

This project was also selected for the Late-Breaking Work track at the India HCI 2019 conference in Hyderabad.

If you want to take a deep dive, you can view the documentation here.

Meet the team through some fun posters for our exhibition!

Sohayainder Kaur, Vanshika Sanghi, Rashi Balachandran

Abhijit Balaji

Janaki Syam, Arsh Ashwini Kumar

Chaitali, Ananya